R-4B: Incentivizing General-Purpose Auto-Thinking Capability in MLLMs via Bi-Mode Annealing and Reinforce Learning

[📚 Arxiv Paper] [🤗 Hugging Face] [🤖️ ModelScope] [💻 Code]

⭐️ Introduction

In this repo, we present R-4B, a multimodal large language model designed for general-purpose auto-thinking, autonomously switching between step-by-step thinking and direct response generation based on task complexity. This capability enables R-4B to deliver high-quality responses while significantly improving inference efficiency and reducing computational costs.

The development of R-4B follows a two-stage training paradigm: (1) Bi-mode Annealing, which establishes both thinking and non-thinking capabilities for VQA; and (2) Bi-mode Policy Optimization (BPO), which enables the model to adaptively switch between thinking and non-thinking modes based on input demands.

🚀 Key Features

🧠 Think Smart, Act Fast: Adaptive & Controllable Thinking! Our model provides three-mode control over the response process.

- Auto-thinking Mode: Unleash auto-thinking that works across general topics, from simple Q&A to complex scientific analysis. It saves time and computation by thinking only when it matters.

- Manual Control: Explicitly command the model to use its `thinking` or `non-thinking` capabilities, so you can choose the mode that suits each task.

🏆 Strong Performance, Open for Everyone! Our model is now fully open-source. It achieves state-of-the-art performance among models of comparable size.

📢 News

- [2025.08.20] 🚀 vLLM Support is Here! Our R-4B model is now fully compatible with vLLM for high-performance inference.

- [2025.08.18] 🏆 Top Rank Achieved! We are thrilled to announce that R-4B is now ranked #1 among all open-source models on the OpenCompass Multi-modal Reasoning Leaderboard!

- [2025.08.11] 🥇 Rank #1! R-4B ranks first under 20B parameters on the OpenCompass Multi-modal Academic Leaderboard!

- [2025.08.05] 🎉 R-4B is Released! Our model is now publicly available. You can download it from Hugging Face.

🔥 Quickstart

Below, we provide simple examples to show how to use R-4B with 🤗 Transformers.

Using 🤗 Transformers to Chat

Users can dynamically control the model's response by selecting one of three modes (`auto-thinking`, `thinking`, or `non-thinking`) with `thinking_mode`: `thinking_mode=auto` for `auto-thinking` mode; `thinking_mode=long` for `thinking` mode; `thinking_mode=short` for `non-thinking` mode. The default is `auto-thinking`.

import requests
from PIL import Image
import torch
from transformers import AutoModel, AutoProcessor

model_path = "YannQi/R-4B"

# Load model
model = AutoModel.from_pretrained(
    model_path,
    torch_dtype=torch.float32,
    trust_remote_code=True,
).to("cuda")

# Load processor
processor = AutoProcessor.from_pretrained(model_path, trust_remote_code=True)

# Define conversation messages
messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": "http://images.cocodataset.org/val2017/000000039769.jpg",
            },
            {"type": "text", "text": "Describe this image."},
        ],
    }
]

# Apply chat template
text = processor.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
    thinking_mode="auto"
)

# Load image
image_url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(image_url, stream=True).raw)

# Process inputs
inputs = processor(
    images=image,
    text=text,
    return_tensors="pt"
).to("cuda")

# Generate output
generated_ids = model.generate(**inputs, max_new_tokens=16384)
output_ids = generated_ids[0][len(inputs.input_ids[0]):]

# Decode output
output_text = processor.decode(
    output_ids,
    skip_special_tokens=True,
    clean_up_tokenization_spaces=False
)

# Print result
print("Auto-Thinking Output:", output_text)
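The mode names and `thinking_mode` values described above can be kept in one place. The following is a minimal sketch of a helper that turns a mode name into the keyword arguments passed to `processor.apply_chat_template`; the names `MODE_TO_FLAG` and `template_kwargs` are illustrative and are not part of the R-4B code.

```python
# Illustrative helper (not part of the R-4B codebase): translate the mode
# names used in this README into the `thinking_mode` value passed to the
# chat template.
MODE_TO_FLAG = {
    "auto-thinking": "auto",   # the model decides whether to think
    "thinking": "long",        # always reason step by step
    "non-thinking": "short",   # always answer directly
}


def template_kwargs(mode: str = "auto-thinking") -> dict:
    """Return keyword arguments for `processor.apply_chat_template`."""
    if mode not in MODE_TO_FLAG:
        raise ValueError(
            f"unknown mode {mode!r}; expected one of {sorted(MODE_TO_FLAG)}"
        )
    return {
        "tokenize": False,
        "add_generation_prompt": True,
        "thinking_mode": MODE_TO_FLAG[mode],
    }


if __name__ == "__main__":
    # Show the keyword arguments produced for each mode.
    for mode in MODE_TO_FLAG:
        print(mode, "->", template_kwargs(mode))
```

With the processor and messages from the example above, this would be used as `text = processor.apply_chat_template(messages, **template_kwargs("thinking"))`.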

Using vLLM for fast R-4B deployment and inference.

- We recommend using vLLM for fast R-4B deployment and inference.

Install

R-4B currently requires the latest version of vLLM. Please install it from source:

git clone https://github.com/vllm-project/vllm.git
cd vllm
VLLM_USE_PRECOMPILED=1 uv pip install --editable .

Online Serving

The `thinking_mode` switch is also available in APIs created by vLLM. The default is `auto-thinking`.

- Serve

vllm serve \
    yannqi/R-4B \
    --served-model-name r4b \
    --tensor-parallel-size 8 \
    --gpu-memory-utilization 0.8 \
    --host 0.0.0.0 \
    --port 8000 \
    --trust-remote-code

- OpenAI Chat Completion Client

import base64
from PIL import Image
from openai import OpenAI

# Set OpenAI's API key and API base to use vLLM's API server.
openai_api_key = "EMPTY"
openai_api_base = "http://localhost:8000/v1"

client = OpenAI(
    api_key=openai_api_key,
    base_url=openai_api_base,
)

# image url
image_messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image_url",
                "image_url": {
                    "url": "http://images.cocodataset.org/val2017/000000039769.jpg"
                },
            },
            {"type": "text", "text": "Describe this image."},
        ],
    },
]

chat_response = client.chat.completions.create(
    model="r4b",
    messages=image_messages,
    max_tokens=16384,
    extra_body={
        "chat_template_kwargs": {"thinking_mode": "auto"},
    },
)

print("Chat response:", chat_response)
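The client above sends the image by URL. For a local file, a common pattern with OpenAI-compatible servers is to inline the image as a base64 data URL. The following is a minimal sketch, assuming the server accepts `data:` URLs in the `image_url` field; the helper names `to_data_url` and `build_request` and the file name `cat.jpg` are hypothetical, and the model name `r4b` must match the `--served-model-name` used when serving.

```python
import base64


def to_data_url(image_bytes: bytes, mime_type: str = "image/jpeg") -> str:
    """Encode raw image bytes as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_request(image_bytes: bytes, prompt: str, thinking_mode: str = "auto") -> dict:
    """Build keyword arguments for `client.chat.completions.create`."""
    return {
        "model": "r4b",  # assumed to match --served-model-name
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "image_url",
                        "image_url": {"url": to_data_url(image_bytes)},
                    },
                    {"type": "text", "text": prompt},
                ],
            }
        ],
        "max_tokens": 16384,
        # Forwarded to the chat template: "auto", "long", or "short".
        "extra_body": {"chat_template_kwargs": {"thinking_mode": thinking_mode}},
    }


# Usage with the `client` defined above (hypothetical local file):
#
#     with open("cat.jpg", "rb") as f:
#         request = build_request(f.read(), "Describe this image.", "short")
#     chat_response = client.chat.completions.create(**request)
```

Keeping the request construction separate from the network call makes it easy to inspect the payload, or to switch `thinking_mode` per request, before anything is sent to the server.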

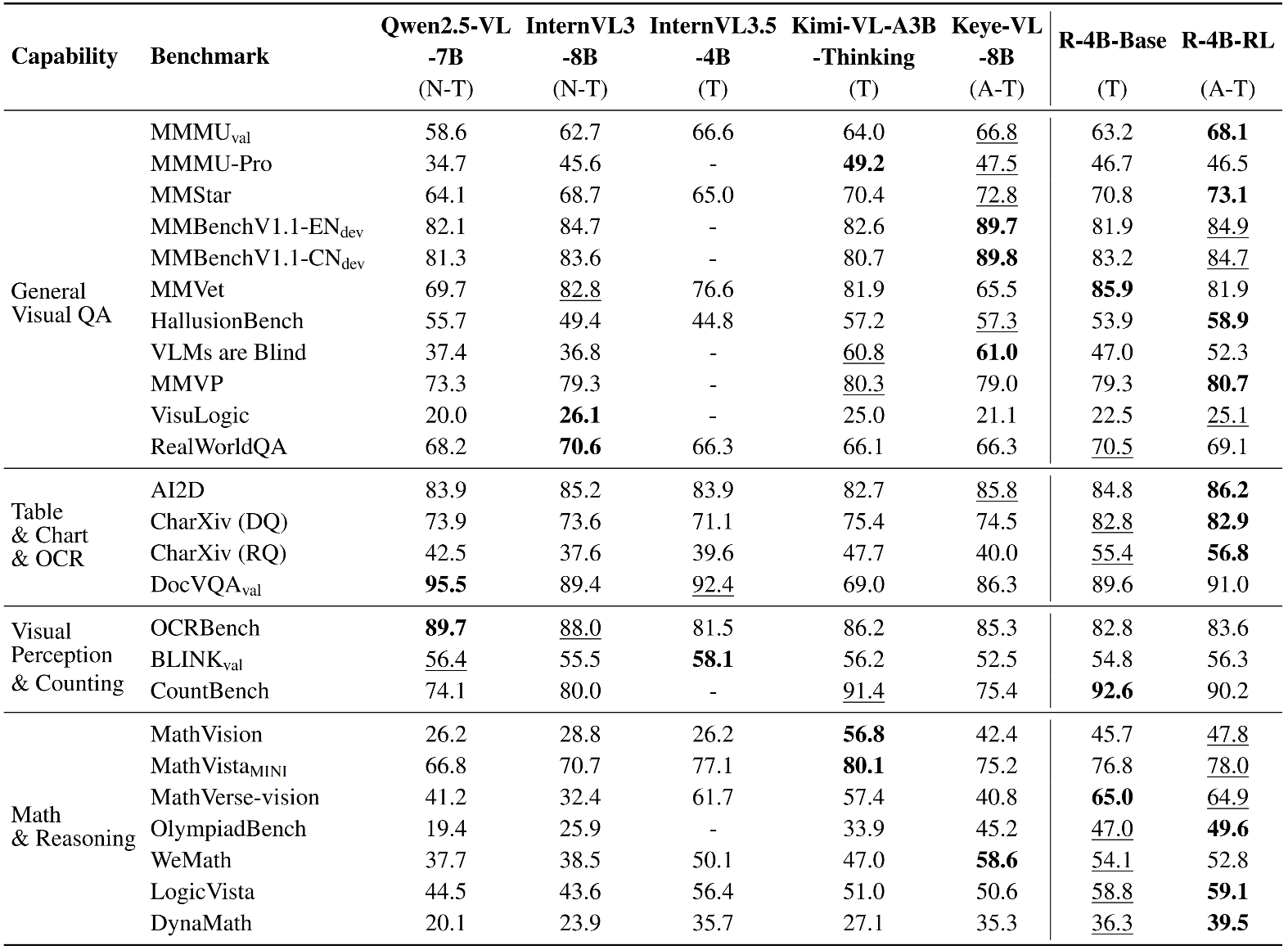

📈 Experimental Results

- R-4B establishes itself with powerful, state-of-the-art perceptual abilities that are competitive with larger models.

- On benchmarks that require complex logical reasoning and mathematical problem-solving, such as WeMath, MathVerse, and LogicVista, R-4B performs strongly. This highlights its adaptive thinking capacity for logical deduction and for solving complex quantitative problems.

✒️ Citation

@misc{yang2025r4bincentivizinggeneralpurposeautothinking,
    title={R-4B: Incentivizing General-Purpose Auto-Thinking Capability in MLLMs via Bi-Mode Annealing and Reinforce Learning},
    author={Qi Yang and Bolin Ni and Shiming Xiang and Han Hu and Houwen Peng and Jie Jiang},
    year={2025},
    eprint={2508.21113},
    archivePrefix={arXiv},
    primaryClass={cs.CV},
    url={https://arxiv.org/abs/2508.21113},
}

Acknowledgements

R-4B is developed based on the codebases of the following projects: LLaVA-Next, SigLIP2, Qwen3, Qwen2.5-VL, VLMEvalKit. We sincerely thank these projects for their outstanding work.

Model tree for YannQi/R-4B

Base model

Qwen/Qwen3-4B-Base